Packaging beats peak performance: why open-source models stall at the doorstep

There’s a pattern I keep seeing: an engineering team furiously trains a model, throws the weights up on a public registry, and then waits. Silence. Not because the model is bad, but because the work that actually unlocks usage (distribution, inference packaging, and developer ergonomics) was left for later. Two recent stories brought the pattern into sharp relief: one about a large company putting together an opinionated, enterprise-focused agent platform, and another where a promising open model hit the wall because nobody could easily run it locally. Same problem, different faces: packaging, not pure performance, decides who gets adopted.

Why packaging matters more than you think

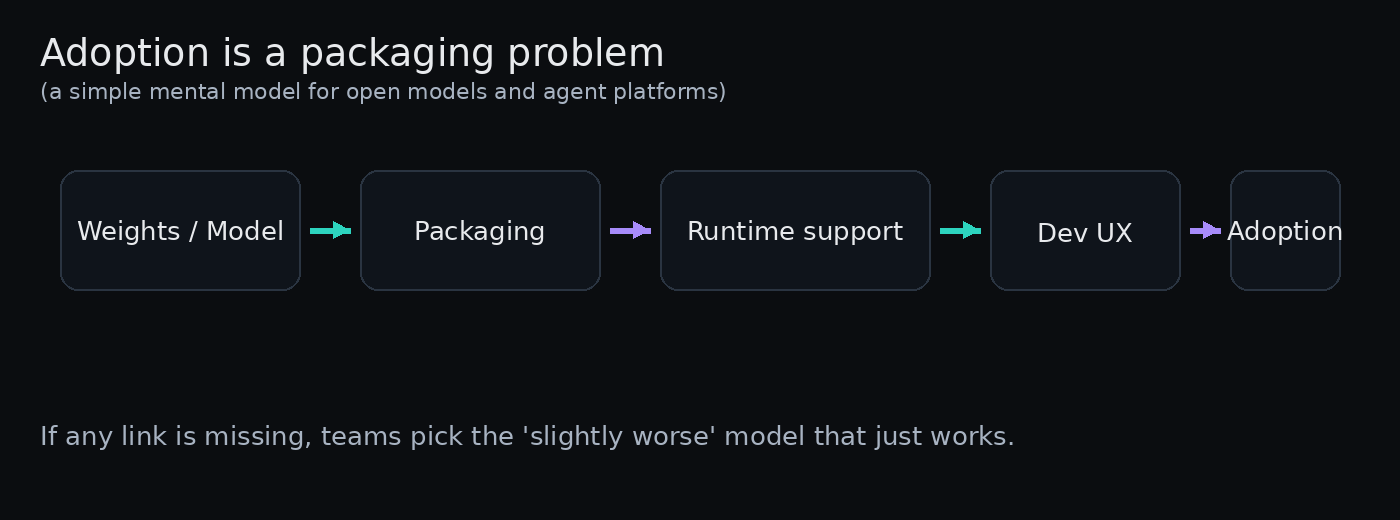

Models are dense technical achievements, but they’re not products on their own. A model is a component. For a developer or product manager to treat it like a component they can actually use, three things need to happen:

- It must be accessible in the formats and runtimes the ecosystem already uses.

- It must be easy to evaluate cheaply and quickly.

- It must compose with toolchains—tokenizers, inference engines, tool calling frameworks—without a day-one deep dive.

If any of those is missing, adoption stalls. People will switch to the slightly worse model that just works out of the box, because velocity beats a marginal quality improvement every time.

Two contrasting signals from the field

Big vendors packaging agents as a product

When incumbents decide to ship an agent platform, they don’t just release models. They bundle SDKs, observability, security integrations, deployment templates, and partner connectors. That’s not accidental. Enterprises care about operational risk: isolation, audits, rollout control, and predictable infra cost. What looks like a marketing move is often a rational answer to procurement and SRE requirements. The platform sells because it reduces the integration bill—and it can be opinionated about formats and runtimes, which makes it simpler for internal teams to adopt.

Open-source weights without the lift

Contrast that with a model release that drops weights in a single format and asks the community to figure out the rest. If common runtimes don’t support the format, if there’s no GGUF conversion, and if chat templates or tool-calling glue are incomplete, developers run straight past it. The outcome is predictable: people pick a slightly older or smaller model that plugs into vLLM, llama.cpp, or whatever their pipeline already uses. The model itself becomes a research artifact rather than a usable building block.
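To make the format gap concrete, here is a minimal sketch of a check a release owner could run against the list of files they are about to publish. The extension-to-format mapping and the function names are my own assumptions for illustration, not part of any registry's tooling.

```python
from pathlib import PurePosixPath

# Illustrative mapping from file extension to weight format (an assumption;
# extend it for whatever runtimes your audience actually uses).
KNOWN_FORMATS = {
    ".gguf": "GGUF",
    ".safetensors": "safetensors",
    ".onnx": "ONNX",
}


def formats_present(filenames):
    """Return the set of known weight formats found among release file names."""
    found = set()
    for name in filenames:
        suffix = PurePosixPath(name).suffix.lower()
        if suffix in KNOWN_FORMATS:
            found.add(KNOWN_FORMATS[suffix])
    return found


def missing_formats(filenames, wanted):
    """Return the wanted formats that the release does not ship, sorted."""
    return sorted(set(wanted) - formats_present(filenames))


# Hypothetical release listing: weights in one format only.
release = ["model-00001-of-00002.safetensors", "tokenizer.json", "README.md"]
print(missing_formats(release, ["GGUF", "safetensors"]))
```

A check like this only looks at file names, so it says nothing about whether a conversion actually loads in a given runtime. It is a cheap pre-release reminder, not a compatibility test.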

Why this is a product problem, not just engineering

Engineers build capabilities. Products remove friction. For open models to succeed, someone has to own the “last mile”: the conversions, the hosted inference endpoint, the reference SDKs, and getting the model listed on popular inference marketplaces. That’s product work: prioritization, docs, SDK releases, and marketing to developer communities. It’s rarely glamorous, and it rarely wins research awards, but it determines whether a model gets embedded into apps or piles up in a downloads folder.

Three practical moves for teams that want their model adopted

If you’ve produced a model and want people to actually use it, these are the pragmatic steps that matter more than another benchmark result.

- Ship the formats people use: provide GGUF, safetensors, ONNX where it makes sense. If you can’t be in every runtime on day one, be in the top three for your target audience.

- Publish a minimal inference endpoint and a tiny “playground” that runs on cheap infra. Developers will try a hosted demo before spinning up hardware.

- Bundle a conversion and starter kit: tokenizer, chat template, and a one-click example to hook up tool-calling or RAG. Make the first working app take under 15 minutes.

These are small, high-leverage bets. They don’t need perfect engineering—just enough to let people instrument, test, and prototype.
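As one example of what "starter kit" glue looks like, here is a minimal sketch of a chat template renderer. It uses a ChatML-style layout purely as an illustrative assumption. A real model defines its own template, and the function name `render_chat` is hypothetical.

```python
def render_chat(messages, add_generation_prompt=True):
    """Render role/content messages into a single prompt string.

    Uses a ChatML-style layout as an illustrative assumption; the template
    that matters is the one the model was actually trained with.
    """
    parts = []
    for msg in messages:
        parts.append(f"<|im_start|>{msg['role']}\n{msg['content']}<|im_end|>\n")
    if add_generation_prompt:
        # Leave the assistant turn open so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = render_chat([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Summarize this release note."},
])
print(prompt)
```

The point is less the ten lines of code than the fact that someone has to ship them. If the template is missing or wrong, every downstream developer rediscovers it by trial and error.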

How incumbents turn packaging into a moat

When a large vendor builds an opinionated agent platform, a subtle lock-in happens. Not because the models are proprietary (they might not be), but because the platform owns the integration surface: observability, authentication, billing, and deployment patterns. Teams adopt the platform because it removes work and risk. Over time, the cost of switching rises, and it comes from migration overhead rather than from any gap in model accuracy.

That’s why you’ll often see vendors emphasize partner integrations and enterprise controls early: these are the levers that turn a technical capability into a repeatable operational solution.

What builders should actually care about

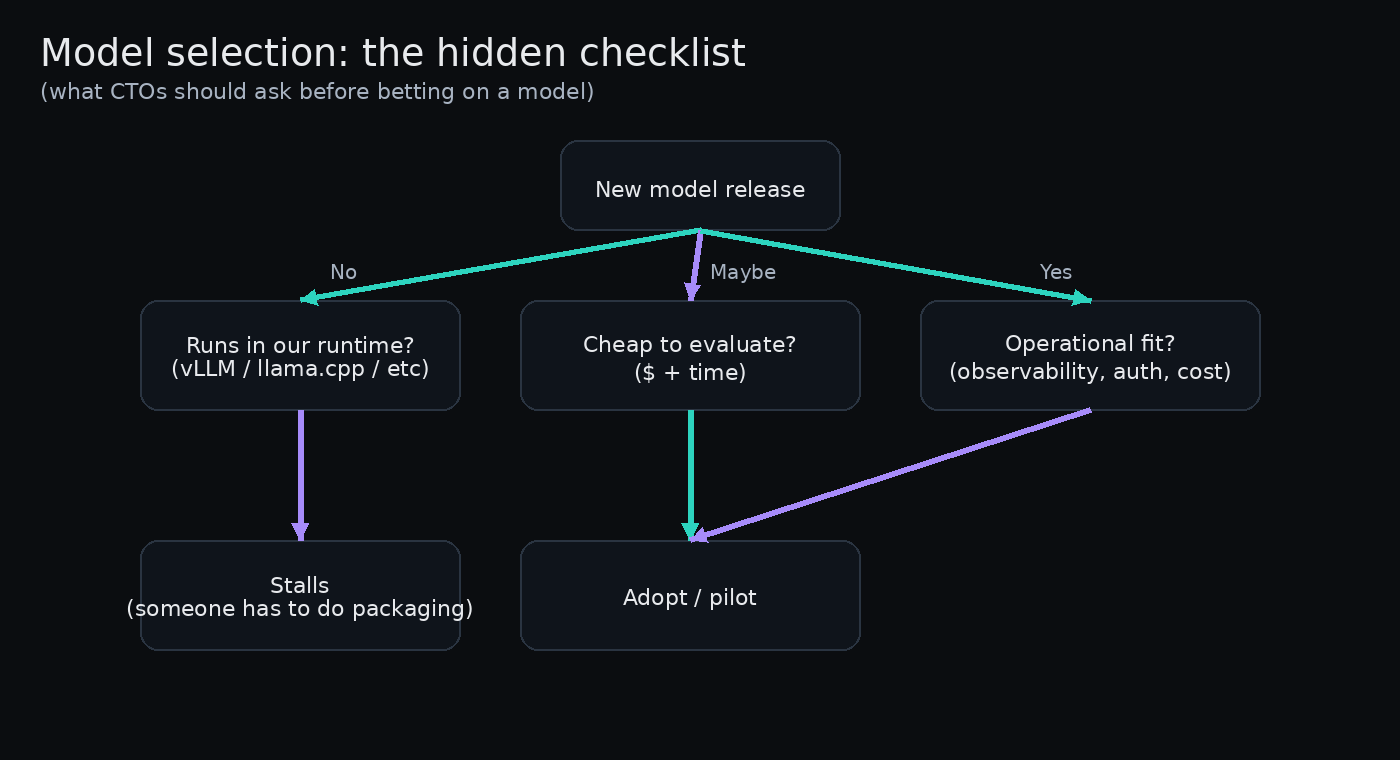

If you’re building with models or you’re on a team deciding whether to run something in-house, your job is to short-circuit false choices. Don’t treat model X vs model Y as the only axis. Ask:

- Can I evaluate this with a $20 test bed in an afternoon?

- Will my existing toolchain accept this format without surgery?

- Is there a realistic path from prototype to monitored production?

If the answer to any of those is “no,” the model is less valuable than it looks on paper.
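If it helps to make those questions explicit in a review, here is a rough sketch that turns them into a checklist. The `Candidate` fields, the four-hour reading of "an afternoon," and the example values are all assumptions of mine. Only the $20 budget comes from the questions above.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    eval_cost_usd: float       # estimated cost of a first evaluation
    eval_hours: float          # estimated time to a first evaluation
    format_supported: bool     # existing toolchain loads it as-is
    has_production_path: bool  # a credible route to monitored production


def blockers(c, max_cost_usd=20.0, max_hours=4.0):
    """Return the adoption blockers for a candidate model (empty list if none)."""
    issues = []
    if c.eval_cost_usd > max_cost_usd or c.eval_hours > max_hours:
        issues.append("cannot be evaluated cheaply in an afternoon")
    if not c.format_supported:
        issues.append("format needs toolchain surgery")
    if not c.has_production_path:
        issues.append("no realistic path to monitored production")
    return issues


# Hypothetical candidate: strong on paper, awkward to run.
model_x = Candidate("model-x", eval_cost_usd=150.0, eval_hours=16.0,
                    format_supported=False, has_production_path=True)
print(blockers(model_x))
```

An empty list does not mean the model is the right choice. It only means packaging is not the thing standing in the way.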

Retail/PPC analogy (short)

Think of models like ad creatives. A marginally better creative that takes two weeks to QA and publish will lose to a slightly worse creative you can deploy in an hour and iterate on with A/B tests. Velocity—small, safe bets—beats theoretical win rates in fast-moving systems.

Three bets I’d place as a PM

If you’re the product owner for a model or for tooling around models, here’s what I’d prioritize in order:

- Developer experience: make the first 15 minutes delightful. If someone can’t get a demo running quickly, they’ll move on.

- Inference options: supported runtimes, small hosted tier, and a conversion pipeline.

- Operational playbooks: simple monitoring, cost estimates, and a migration checklist for customers who want to move away later.

Closing: productize the last mile

There’s an asymmetry in AI adoption: the heavy lifting of training and papers gets attention, but the quiet, mundane work of packaging decides who wins. If you’re a founder or an engineering lead, your healthiest obsession should be “how do we make this trivial to try?” Because the teams that answer that question will win more users than the teams that chase the last 1–2% of benchmark performance.

Make it trivial to try, and people will. Make it hard, and performance won’t matter.